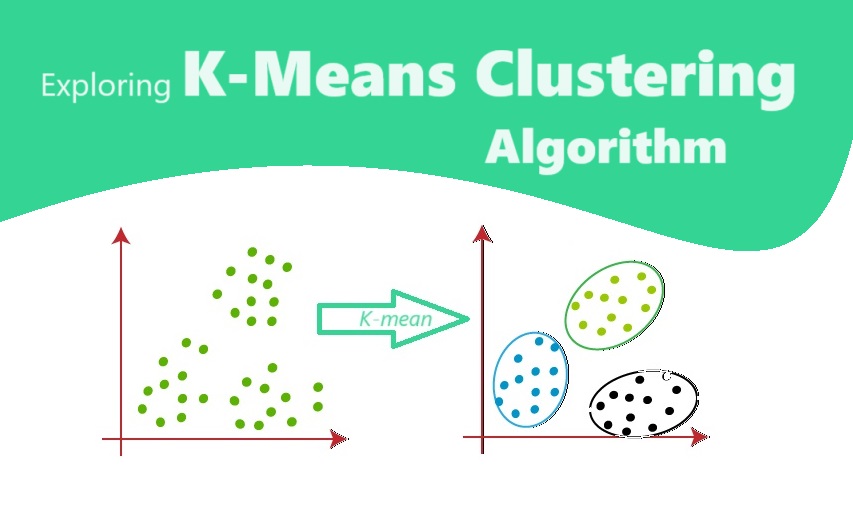

K-Means clustering is a popular unsupervised machine learning algorithm used for grouping similar data points together. It is widely used in various fields such as data analysis, image segmentation, customer segmentation, and anomaly detection. In this article, we will explore the concept of K-Means clustering, its algorithm, and its applications.

What is K-Means Clustering?

K-Means clustering is a technique that divides a given dataset into K clusters, where each data point belongs to the cluster with the nearest mean. The value of K is determined by the user and represents the number of clusters desired. The K-Means clustering algorithm aims to minimize the sum of squared distances between the data points and their respective cluster centroids.
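Written out, the objective described above (often called the within-cluster sum of squares) can be expressed as follows. The symbols are notation assumed here for illustration, not taken from the article: $x_i$ is a data point, $C_k$ is the set of points assigned to cluster $k$, and $\mu_k$ is the centroid (mean) of that cluster.

```latex
J = \sum_{k=1}^{K} \sum_{x_i \in C_k} \lVert x_i - \mu_k \rVert^2,
\qquad
\mu_k = \frac{1}{|C_k|} \sum_{x_i \in C_k} x_i
```

A smaller $J$ means the points sit closer to their assigned centroids.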

The K-Means Clustering Algorithm

The K-Means clustering algorithm can be summarized in the following steps:

1. Randomly initialize K cluster centroids.

2. Assign each data point to the nearest centroid based on the Euclidean distance.

3. Recalculate each centroid as the mean of all data points assigned to it.

4. Repeat steps 2 and 3 until the centroids stop changing or a maximum number of iterations is reached.

The algorithm is considered to have converged when the centroids no longer change significantly between iterations. If the maximum number of iterations is reached first, it stops without having converged. The final result is a set of K clusters, each represented by its centroid.
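The assignment and update steps can be traced by hand on a tiny example. The sketch below is only an illustration: the four 1-D points, the deliberately poor starting centroids, and the iteration cap of 10 are all assumptions chosen to keep the arithmetic easy to follow.

```python
import numpy as np

# Hypothetical toy data: four 1-D points forming two obvious groups.
points = np.array([1.0, 2.0, 10.0, 11.0])
# Deliberately poor starting centroids (both inside the left group).
centroids = np.array([1.0, 2.0])

history = [centroids.copy()]
for _ in range(10):  # cap on the number of iterations
    # Assignment step: index of the nearest centroid for every point.
    labels = np.argmin(np.abs(points[:, None] - centroids[None, :]), axis=1)
    # Update step: each centroid moves to the mean of its assigned points.
    new_centroids = np.array(
        [points[labels == j].mean() for j in range(len(centroids))]
    )
    if np.allclose(new_centroids, centroids):
        break  # centroids stopped moving, so the loop has converged
    centroids = new_centroids
    history.append(centroids.copy())

# Print the centroid positions after each update.
for step, c in enumerate(history):
    print(step, c)
```

In this run the centroids move from (1, 2) to roughly (1, 7.67) and then to (1.5, 10.5), after which the assignments stop changing and the loop exits.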

Advantages and Limitations of K-Means Clustering

K-Means clustering has several advantages:

- It is simple and easy to implement.

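- It is computationally efficient: each iteration costs time roughly proportional to the number of data points times K times the number of dimensions, which makes it suitable for large datasets.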

- It works well with numeric data and can handle a large number of dimensions, although Euclidean distances become less discriminative as dimensionality grows.

However, K-Means clustering also has some limitations:

- It requires the number of clusters (K) to be specified in advance, which can be challenging.

- It is sensitive to the initial placement of centroids, so different starting points can converge to different final clusterings.

- It assumes that clusters are spherical and of equal size, which may not always be the case in real-world data.
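The sensitivity to initialization can be seen on a very small dataset. The following is a minimal sketch, not a reference implementation: `run_kmeans` is a hypothetical helper written for this illustration, and the four points and both starting configurations are assumptions picked so that the two runs end in different places.

```python
import numpy as np

def run_kmeans(X, centroids, max_iterations=100):
    """Plain K-Means iterations from the given starting centroids (illustrative helper)."""
    centroids = centroids.astype(float).copy()
    for _ in range(max_iterations):
        # Squared distance from every point to every centroid: shape (n, k).
        d2 = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        new_centroids = centroids.copy()
        for j in range(len(centroids)):
            members = X[labels == j]
            if len(members) > 0:  # keep the old centroid if a cluster is empty
                new_centroids[j] = members.mean(axis=0)
        if np.allclose(new_centroids, centroids):
            break
        centroids = new_centroids
    # Recompute labels and the sum of squared distances for the final centroids.
    d2 = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    labels = d2.argmin(axis=1)
    inertia = d2[np.arange(len(X)), labels].sum()
    return labels, centroids, inertia

# Four corners of a wide rectangle: the natural split is left vs. right.
X = np.array([[0.0, 0.0], [0.0, 1.0], [4.0, 0.0], [4.0, 1.0]])

# Start A: one centroid on each side.
labels_a, _, inertia_a = run_kmeans(X, X[[0, 2]])
# Start B: both centroids on the left side.
labels_b, _, inertia_b = run_kmeans(X, X[[0, 1]])

print("start A:", labels_a, inertia_a)
print("start B:", labels_b, inertia_b)
```

Both runs converge, but start A separates left from right with a sum of squared distances of 1.0, while start B settles on a top/bottom split with a sum of 16.0. A common way to reduce this risk is to run the algorithm from several starting points and keep the result with the lowest sum.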

Applications of K-Means Clustering

K-Means clustering finds applications in various domains:

Data Analysis

K-Means clustering is widely used in data analysis to discover patterns and relationships within datasets. It helps in identifying groups of similar data points, which can be useful for market segmentation, customer profiling, and anomaly detection.

Image Segmentation

In image processing, K-Means clustering is used for image segmentation, which involves dividing an image into different regions or objects. Grouping pixels with similar colors supports tasks such as object recognition, object tracking, and image compression.
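As a rough illustration of clustering pixels by color, the sketch below builds a small synthetic image and splits it into two regions. Everything in it is an assumption made for the example: the 8x8 image, the two base colors, the noise level, and the choice to seed the centroids with the first and last pixel. A real image would be loaded from a file and would usually need more clusters.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical 8x8 RGB image: dark left half, bright right half, plus mild noise.
height, width = 8, 8
image = np.zeros((height, width, 3))
image[:, : width // 2] = [0.1, 0.1, 0.4]
image[:, width // 2 :] = [0.9, 0.8, 0.2]
image = np.clip(image + rng.normal(0.0, 0.02, image.shape), 0.0, 1.0)

# Treat every pixel as a point in 3-D color space.
pixels = image.reshape(-1, 3)

# Assumption for this sketch: seed the two centroids with the first and last pixel.
centroids = pixels[[0, -1]].copy()
for _ in range(20):
    # Squared color distance from every pixel to every centroid: shape (n, 2).
    d2 = ((pixels[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    labels = d2.argmin(axis=1)
    new_centroids = np.array([pixels[labels == j].mean(axis=0) for j in range(2)])
    if np.allclose(new_centroids, centroids):
        break
    centroids = new_centroids

# One region label per pixel.
segmentation = labels.reshape(height, width)
# Each pixel replaced by the color of its cluster centroid.
quantized = centroids[labels].reshape(image.shape)

print(segmentation)
```

The `segmentation` array marks which region each pixel belongs to, and `quantized` is the same image redrawn with only two colors, which is the idea behind color-quantization-based compression.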

Recommendation Systems

K-Means clustering is utilized in recommendation systems to group users or items based on their preferences or characteristics. This enables personalized recommendations, such as suggesting similar products or movies based on user behavior.

Genomics

In genomics, K-Means clustering is employed to analyze gene expression data and identify clusters of genes with similar expression profiles. This aids in understanding gene function, classifying diseases, and discovering drugs.

Basic implementation of the K-Means algorithm in Python

Here is a basic implementation of the K-Means algorithm in Python, followed by an explanation of each step:

```python
import numpy as np
import matplotlib.pyplot as plt

# Generate some random data points
np.random.seed(0)
X = np.random.rand(50, 2)

# Define the number of clusters
k = 3

# Initialize centroids by picking k distinct data points at random
centroids = X[np.random.choice(X.shape[0], k, replace=False)]

# Visualize the initial centroids and data points
plt.scatter(X[:, 0], X[:, 1], c='blue', label='Data Points')
plt.scatter(centroids[:, 0], centroids[:, 1], c='red', marker='x', label='Initial Centroids')
plt.title('Initial Data Points and Centroids')
plt.xlabel('X')
plt.ylabel('Y')
plt.legend()
plt.show()


# Define a function to assign each data point to the nearest centroid
def assign_clusters(X, centroids):
    # Broadcasting gives a (k, n) matrix of point-to-centroid Euclidean distances
    distances = np.sqrt(((X - centroids[:, np.newaxis]) ** 2).sum(axis=2))
    return np.argmin(distances, axis=0)


# Define a function to update the centroids based on the mean of the assigned data points
def update_centroids(X, clusters, centroids):
    new_centroids = centroids.copy()
    for i in range(len(centroids)):
        members = X[clusters == i]
        # An empty cluster keeps its previous centroid instead of becoming NaN
        if len(members) > 0:
            new_centroids[i] = members.mean(axis=0)
    return new_centroids


# Run the K-Means algorithm
max_iterations = 10
for i in range(max_iterations):
    clusters = assign_clusters(X, centroids)
    new_centroids = update_centroids(X, clusters, centroids)

    # Check for convergence
    if np.allclose(centroids, new_centroids):
        print(f"Converged after {i + 1} iterations")
        break

    centroids = new_centroids

# Visualize the final clusters and centroids
plt.scatter(X[:, 0], X[:, 1], c=clusters)
plt.scatter(centroids[:, 0], centroids[:, 1], c='red', marker='x')
plt.title('Final Clusters and Centroids')
plt.xlabel('X')
plt.ylabel('Y')
plt.show()
```

Explanation:

- Import necessary libraries: `numpy` for numerical computations and `matplotlib.pyplot` for visualization.

- Generate random data points using `np.random.rand`.

- Define the number of clusters, `k`.

- Initialize centroids randomly by selecting `k` data points randomly from the dataset.

- Visualize the initial centroids and data points using `plt.scatter`.

- Define a function `assign_clusters` to assign each data point to the nearest centroid.

- Define a function `update_centroids` to update the centroids based on the mean of the assigned data points.

- Run the K-Means algorithm for a maximum number of iterations.

- Visualize the final clusters and centroids.

This code provides a basic implementation of the K-Means algorithm in Python for clustering data points into k clusters.

Conclusion

K-Means clustering is a powerful algorithm for grouping similar data points together. Its simplicity and efficiency make it a popular choice in various domains. By understanding the concept, algorithm, and applications of K-Means clustering, you can leverage its potential to uncover hidden patterns and gain valuable insights from your datasets.